Update: See my new post introducing Peekaboo.

Here’s a post I’ve been meaning to write for a while, but just never got around to it.

As a Waterloo Engineering student, I have to complete a final-year design project in a group of three, under the guidance of a supervisor.

What have I been making?

My project is called Peekaboo. It uses facial detection and recognition to allow a user to find out more about people around them.

I’ve been working on this for about five months now with my group members. We’ve made enough progress that I can now talk about our system architecture and some of the design decisions we made.

System Overview

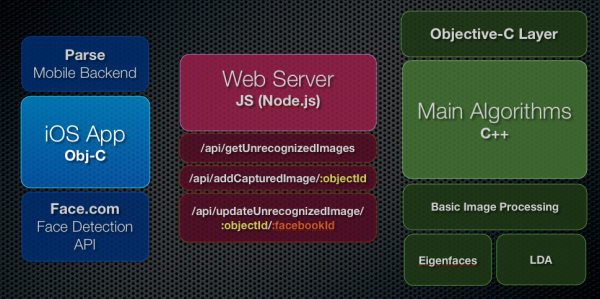

Peekaboo comprises three components, shown in the diagram above.

iOS App + Face Detection + Parse

We have a native iOS app that uses Parse for its mobile backend. We’ve been very happy with Parse, and it was painless to set up. Face detection is performed on the device itself through Face.com’s API. We went with Face.com over OpenCV’s face detection due to its higher accuracy and the availability of more data. Face.com gives us JSON back with eye positions, mouth positions, head tilt and yaw, gender, and more.

I strongly believe user experience should be important to any project. We use a lot of great open source iOS UI components available at Cocoa Controls.

Web Server on Heroku

We wrote a web server in Node.js, deployed on Heroku, to act as an API. Once again, Heroku makes this painless to set up and deploy. The web server is the mediator between the iOS app and the desktop C++ code. It talks to Parse to get information and feeds it to the C++ code (and vice versa). It uses standard authentication.

The web server has a MySQL database which we usually query instead of directly querying Parse. This way, we stay below the rate limits. Parse is only queried when there is a face that needs recognition.
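The general idea is a local-first lookup: answer from our own store whenever we can, and only go to the rate-limited remote service when we have to. Here is a minimal sketch of that pattern. It is written in C++ for illustration rather than taken from our Node.js server, and `Profile`, `ProfileCache`, and the fetch callback are hypothetical names, not our actual schema or the Parse API.

```cpp
#include <functional>
#include <string>
#include <unordered_map>
#include <utility>

// Hypothetical record; the real schema is not shown in this post.
struct Profile {
    std::string name;
};

// Local-first lookup: serve from the local store when possible and call the
// remote (rate-limited) source only on a miss.
class ProfileCache {
public:
    // The callback fills `out` and returns true if the remote source knows the id.
    using RemoteFetch = std::function<bool(const std::string&, Profile&)>;

    explicit ProfileCache(RemoteFetch fetch) : fetch_(std::move(fetch)) {}

    // Returns a pointer to the stored profile, or nullptr if nobody knows the id.
    const Profile* lookup(const std::string& userId) {
        auto hit = local_.find(userId);
        if (hit != local_.end()) {
            return &hit->second;  // served locally, no remote call spent
        }
        ++remoteCalls_;
        Profile fetched;
        if (!fetch_(userId, fetched)) {
            return nullptr;
        }
        // Remember the answer so the next lookup stays local.
        auto inserted = local_.emplace(userId, std::move(fetched));
        return &inserted.first->second;
    }

    // Number of times the remote source was actually queried.
    int remoteCalls() const { return remoteCalls_; }

private:
    RemoteFetch fetch_;
    std::unordered_map<std::string, Profile> local_;
    int remoteCalls_ = 0;
};
```

Looking up the same id repeatedly costs one remote call in total, which is what keeps the request count low.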

- We found Sequelize to be a good ORM for MySQL (available as an npm module).

- We found Request to be a useful module for simplifying HTTP requests.

Facial Recognition Algorithms

I won’t lie, I’m not the best at C++. I find web languages and Objective-C a lot easier to code in. Nonetheless, for face recognition we had to use C++: it’s mainstream and fast. Of course, we went with OpenCV, one of the most popular computer vision libraries out there (see my post on how to set up OpenCV on Mac).

Like I said, we don’t use OpenCV for face detection. We use it to work with images, do some basic image processing (histogram equalization), and run its Eigenfaces implementation. We have also implemented another facial recognition algorithm known as LDA (Linear Discriminant Analysis). This was done mostly through reading research papers and contacting people smarter than us. Google Scholar is a useful resource. LDA improves our recognition rate by 10-15% over Eigenfaces.
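For anyone wondering what histogram equalization actually does: it spreads the pixel intensities of an image across the full 0-255 range, which helps even out lighting differences between photos. In OpenCV this is a single call to `cv::equalizeHist`. The sketch below is not our code and not OpenCV's implementation; it is a plain C++ version of the textbook method (remap each pixel through the cumulative histogram), assuming an 8-bit grayscale image stored as a flat buffer. The function name is made up for illustration.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <vector>

// Textbook histogram equalization for an 8-bit grayscale image stored as a
// flat pixel buffer. Illustrative only; the function name is hypothetical.
std::vector<std::uint8_t> equalizeHistogram(const std::vector<std::uint8_t>& pixels) {
    if (pixels.empty()) {
        return {};
    }

    // 1. Count how many pixels have each intensity.
    std::array<std::size_t, 256> hist{};
    for (std::uint8_t p : pixels) {
        ++hist[p];
    }

    // 2. Build the cumulative distribution: cdf[i] = pixels with value <= i.
    std::array<std::size_t, 256> cdf{};
    std::size_t running = 0;
    for (std::size_t i = 0; i < 256; ++i) {
        running += hist[i];
        cdf[i] = running;
    }

    // 3. Find the smallest non-zero CDF value (the darkest intensity present).
    std::size_t cdfMin = 0;
    for (std::size_t i = 0; i < 256; ++i) {
        if (cdf[i] != 0) {
            cdfMin = cdf[i];
            break;
        }
    }

    const std::size_t total = pixels.size();
    if (total == cdfMin) {
        return pixels;  // single-intensity image: nothing to spread out
    }

    // 4. Remap so the darkest present value goes to 0 and the brightest to 255.
    std::vector<std::uint8_t> out;
    out.reserve(total);
    for (std::uint8_t p : pixels) {
        const double scaled = static_cast<double>(cdf[p] - cdfMin) /
                              static_cast<double>(total - cdfMin) * 255.0;
        out.push_back(static_cast<std::uint8_t>(scaled + 0.5));  // round to nearest
    }
    return out;
}
```

A low-contrast input such as `{10, 20, 30, 40, 50, 60}` comes out stretched so that its darkest pixel is 0 and its brightest is 255.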

Communication Layer

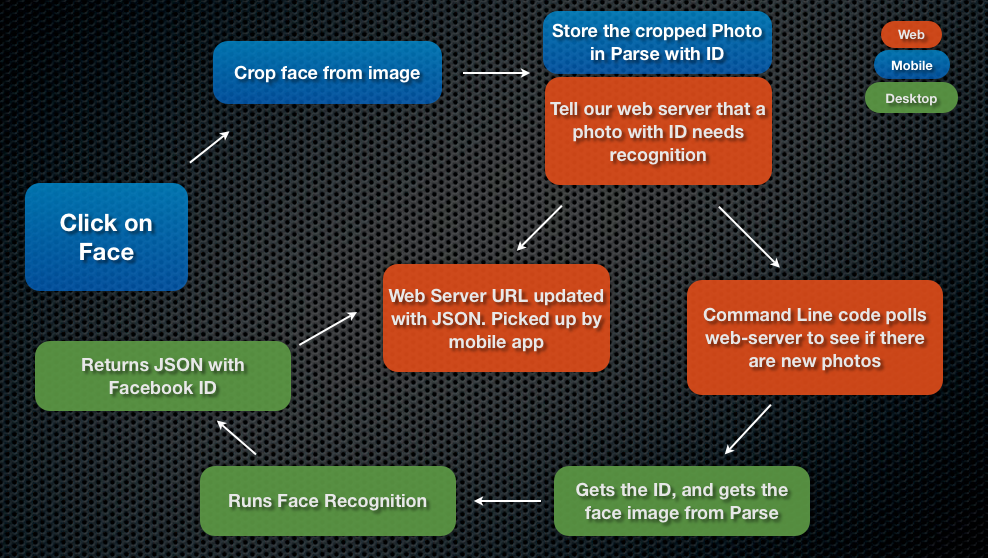

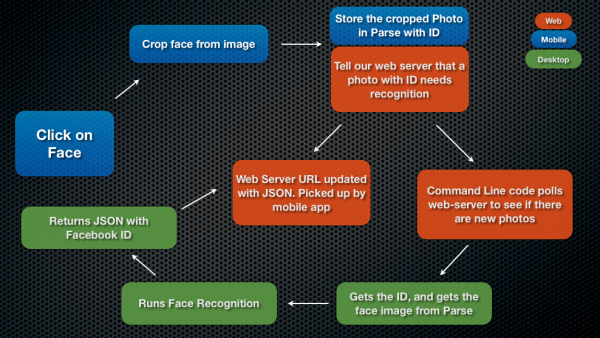

Since I’m not a big C++ guy, I wrote a pretty thin Objective-C layer on top of the C++ code that communicates with the web server and figures out when to execute the facial recognition code. I was able to leverage a lot of my iOS knowledge for this, using standard libraries such as ASIHTTPRequest and SBJSON (ASIHTTPRequest is no longer maintained, but for our project it does the job).

System diagram of how facial recognition works

Final Thoughts

Some parts of our code are a little hacked together. This is partly due to the time crunch we are under and partly due to the fact that we are still learning. However, I am pretty proud of what we have achieved so far. We can recognize a person who has been trained by our system with over 70% accuracy (our database currently holds 70 people). We’ve spent $0 on this project, which is a testament to how open and amazing our software engineering industry is. We have learned a lot throughout this process. I’ll put another post up with a live demo sometime soon.

If you have questions, ask them below!

Hi,

I am working on face recognition using OpenCV in C++. I have completed the detection part, but the recognition part only ever displays the names of people in the database. When an unknown person comes in front of the camera it should display "unknown person", but it displays the name of someone in the database instead.

Please help me out with how to fix this.

Hey, I am currently working on a final-year project building a face recognition system for access control. I want to use open source tools to do this. I recently landed on OpenCV, but my supervisor is very doubtful about it and says he doubts it can do face detection. (In my project I want to identify and verify a user.)

Any help? Thanks a lot.

Hello. I am working on an idea for a facial-recognition-type software. I’ve been sworn to secrecy by my partner but the idea I have is analogous to this:

Create a database of “ideal” body types. Let’s say one is a marathon runner. Another is a body builder. Another is a supermodel.

Now, let’s say I am in the business of recruiting ideal marathon runners today. Tomorrow I will be recruiting for supermodels. Let’s assume that there is a direct correlation between good marathon runners and certain ratios of body measurements. Example: The geometry between the tips of left and right shoulders compared to the placement of the belly button indicates that this person is in the 90th percentile for good marathon runners and, thus, worthy of recruitment.

(yeah, I know, sounds weird but if I tell you what this actually is, it will make perfect sense – it has nothing to do with people)

I need a piece of software, on a mobile app, that will allow me to take a frontal picture of a person, compare it to an ideal, and help me reach a decision to recruit that person or not. If I can make this work, there are millions of people in one particular industry that would pay dearly for this.

I hope this makes sense. Is this something you would be interested in? If so, contact me.

Congratulations on your venture! I have stumbled into the same problem. I’m currently trying to implement a face authentication system that makes use of open source libraries, and I don’t really know where to start. The point is that face detection alone is not enough for me; I need detection plus recognition (for authentication purposes). Any advice you could give me? Some starting points, maybe?

Many Thanks

Paul

Hi,

Thanks for sharing the above knowledge. It is helpful. I have recently been looking for some help with a face recognition system.

I have a few questions I would like your advice on.

1) I plan to work in C#, but I can hardly find any sources for that. The idea is that a user uploads a picture through the web and a C# engine does the comparison. Is this concept possible and workable?

2) During recognition, is there any way to give every human face a code within a standard range as the final result? For example, Jamsh’s face recognition result is 3444567 and Kral’s is 3444570. Both fall in the range 3444500 ~ 3444599, which would mean the two faces look somewhat alike. Can recognition work this way?

I would really appreciate it if you could share more about this.

Thanks

Best Regards

Desmond